|

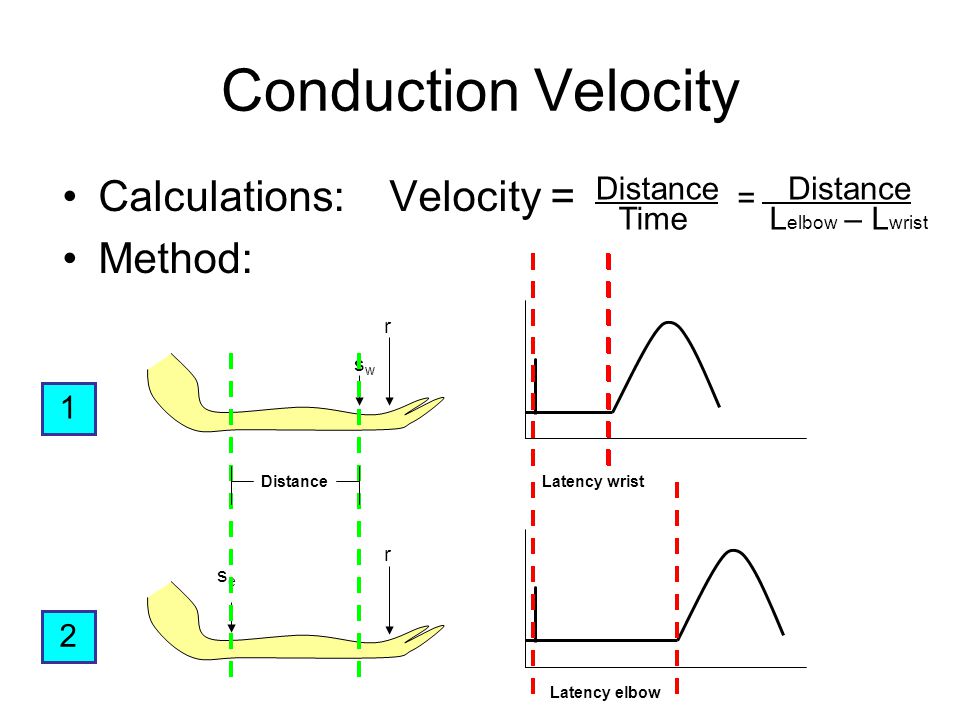

Latency is a time interval between the stimulation and response, or, from a more general point of view, a time delay between the cause and the effect of some physical change in the system being observed.[1] Latency is physically a consequence of the limited velocity with which any physical interaction can propagate. The magnitude of this velocity is always less than or equal to the speed of light. Therefore, every physical system with any physical separation (distance) between cause and effect will experience some sort of latency, regardless of the nature of stimulation that it has been exposed to.

The precise definition of latency depends on the system being observed and the nature of stimulation. In communications, the lower limit of latency is determined by the medium being used for communications. In reliable two-way communication systems, latency limits the maximum rate that information can be transmitted, as there is often a limit on the amount of information that is 'in-flight' at any one moment. In the field of human–machine interaction, perceptible latency has a strong effect on user satisfaction and usability.

Communication latency

Online games are sensitive to latency (or 'lag'), since fast response times to new events occurring during a game session are rewarded while slow response times may carry penalties. Due to a delay in transmission of game events, a player with a high latency internet connection may show slow responses in spite of appropriate reaction time. This gives players with low latency connections a technical advantage.

Minimizing latency is of interest in the capital markets,[2] particularly where algorithmic trading is used to process market updates and turn around orders within milliseconds. Low-latency trading occurs on the networks used by financial institutions to connect to stock exchanges and electronic communication networks (ECNs) to execute financial transactions.[3] Joel Hasbrouck and Gideon Saar (2011) measure latency based on three components: the time it takes for information to reach the trader, the execution of the trader’s algorithms to analyze the information and decide a course of action, and the time it takes for the generated action to reach the exchange and get implemented. Hasbrouck and Saar contrast this with the way latencies are measured by many trading venues, which use much narrower definitions, such as the processing delay measured from the entry of the order (at the vendor’s computer) to the transmission of an acknowledgement (from the vendor’s computer).[4] Electronic trading now makes up 60% to 70% of the daily volume on the NYSE and algorithmic trading close to 35%.[5] Trading using computers has developed to the point where millisecond improvements in network speeds offer a competitive advantage for financial institutions.[6]

Packet-switched networks

Network latency in a packet-switched network is measured as either one-way (the time from the source sending a packet to the destination receiving it), or round-trip delay time (the one-way latency from source to destination plus the one-way latency from the destination back to the source). Round-trip latency is more often quoted, because it can be measured from a single point. Note that round trip latency excludes the amount of time that a destination system spends processing the packet.[citation needed] Many software platforms provide a service called ping that can be used to measure round-trip latency. Ping uses the Internet Control Message Protocol (ICMP) echo request which causes the recipient to send the received packet as an immediate response, thus it provides a rough way of measuring round-trip delay time. Ping cannot perform accurate measurements,[7] principally because ICMP is intended only for diagnostic or control purposes, and differs from real communication protocols such as TCP. Furthermore, routers and internet service providers might apply different traffic shaping policies to different protocols.[8][9] For more accurate measurements it is better to use specific software, for example: hping, Netperf or Iperf.
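As an illustration of measuring round-trip delay from a single point with a real transport protocol rather than ICMP, the sketch below times TCP connection setup. It is only a rough approximation: the function name `tcp_connect_rtt` and the loopback listener are assumptions made for this example, and the measured time also includes local operating system overhead.

```python
import socket
import statistics
import time


def tcp_connect_rtt(host: str, port: int, samples: int = 5, timeout: float = 2.0) -> list[float]:
    """Return TCP connect times in milliseconds, one value per sample."""
    rtts = []
    for _ in range(samples):
        start = time.perf_counter()
        # connect() returns once the SYN / SYN-ACK exchange has completed,
        # so the elapsed time approximates one network round trip.
        with socket.create_connection((host, port), timeout=timeout):
            elapsed = time.perf_counter() - start
        rtts.append(elapsed * 1000.0)
    return rtts


if __name__ == "__main__":
    # Hypothetical target: a throwaway listener on the loopback interface.
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    listener.listen(16)
    port = listener.getsockname()[1]
    rtts = tcp_connect_rtt("127.0.0.1", port)
    listener.close()
    print(f"min {min(rtts):.3f} ms, median {statistics.median(rtts):.3f} ms, max {max(rtts):.3f} ms")
```

Pointing the function at a remote host and port that accepts TCP connections gives a figure closer to what an application would experience, subject to the traffic-shaping caveats mentioned above.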

However, in a non-trivial network, a typical packet will be forwarded over multiple links and gateways, each of which will not begin to forward the packet until it has been completely received. In such a network, the minimal latency is the sum of the transmission delay of each link, plus the forwarding latency of each gateway. In practice, minimal latency also includes queuing and processing delays. Queuing delay occurs when a gateway receives multiple packets from different sources heading towards the same destination. Since typically only one packet can be transmitted at a time, some of the packets must queue for transmission, incurring additional delay. Processing delays are incurred while a gateway determines what to do with a newly received packet. Bufferbloat can also cause increased latency that is an order of magnitude or more. The combination of propagation, serialization, queuing, and processing delays often produces a complex and variable network latency profile.
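The store-and-forward sum described above can be sketched as follows. This is a simplified model under stated assumptions: the `Link` class, the default propagation figure of 5 µs/km and the example path are hypothetical, and queuing delay is deliberately left out because it depends on competing traffic.

```python
from dataclasses import dataclass


@dataclass
class Link:
    length_km: float
    bandwidth_bps: float
    propagation_us_per_km: float = 5.0  # assumed figure, roughly that of optical fibre


def one_way_latency_s(packet_bytes: int, links: list[Link], forwarding_s: float = 0.0) -> float:
    """Minimal one-way latency over a chain of store-and-forward links."""
    bits = packet_bytes * 8
    total = 0.0
    for link in links:
        total += bits / link.bandwidth_bps  # serialization (transmission) delay
        total += link.length_km * link.propagation_us_per_km * 1e-6  # propagation delay
    # Each gateway sitting between two links adds its forwarding latency.
    total += forwarding_s * max(len(links) - 1, 0)
    return total


# Hypothetical path: three 100 km links at 1 Gbit/s, 10 µs forwarding per gateway.
path = [Link(100, 1e9), Link(100, 1e9), Link(100, 1e9)]
print(f"{one_way_latency_s(1500, path, forwarding_s=10e-6) * 1e3:.3f} ms")
```

For this path the propagation term dominates: about 1.5 ms of the roughly 1.56 ms total comes from distance alone.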

Latency limits total throughput in reliable two-way communication systems as described by the bandwidth-delay product.
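A short sketch of the bandwidth-delay product relationship follows. The function names and the example figures (a 100 Mbit/s link, an 80 ms round trip and a 65,535-byte window) are assumptions chosen for illustration.

```python
def bandwidth_delay_product_bytes(bandwidth_bps: float, rtt_s: float) -> float:
    """Amount of data that must be in flight to keep the link full."""
    return bandwidth_bps * rtt_s / 8


def max_throughput_bps(window_bytes: float, rtt_s: float) -> float:
    """Upper bound on throughput when at most window_bytes may be unacknowledged."""
    return window_bytes * 8 / rtt_s


# Hypothetical link: 100 Mbit/s with an 80 ms round-trip time.
print(bandwidth_delay_product_bytes(100e6, 0.080))  # roughly one megabyte in flight
# A sender limited to a 65,535-byte window on that link:
print(max_throughput_bps(65_535, 0.080) / 1e6, "Mbit/s")
```

With these numbers the window, not the link, is the bottleneck: the sender reaches only about 6.5 Mbit/s of the available 100 Mbit/s.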


Fiber optics

Latency in fiber optics is largely a function of the speed of light, which is 299,792,458 meters/second in vacuum. This would equate to a latency of 3.33 µs for every kilometer of path length. The index of refraction of most fibre optic cables is about 1.5, meaning that light travels about 1.5 times as fast in a vacuum as it does in the cable. This works out to about 5.0 µs of latency for every kilometer. In shorter metro networks, higher latency can be experienced due to extra distance in building risers and cross-connects. To calculate latency of a connection, one has to know the distance traveled by the fibre, which is rarely a straight line, since it has to traverse geographic contours and obstacles, such as roads and railway tracks, as well as other rights-of-way.
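The per-kilometer figures above follow directly from the speed of light and the index of refraction. The sketch below reproduces them; the function name and the 1,200 km example route are hypothetical, and the result covers propagation only (no amplifiers, regenerators or switching equipment).

```python
C_VACUUM_M_PER_S = 299_792_458  # speed of light in vacuum


def fiber_latency_us(path_km: float, refractive_index: float = 1.5) -> float:
    """One-way propagation delay in microseconds over path_km of fibre."""
    speed_m_per_s = C_VACUUM_M_PER_S / refractive_index
    return path_km * 1000 / speed_m_per_s * 1e6


print(f"{fiber_latency_us(1, refractive_index=1.0):.2f} µs/km in vacuum")
print(f"{fiber_latency_us(1):.2f} µs/km in fibre with index 1.5")
# Hypothetical 1,200 km fibre route (path length, not straight-line distance):
print(f"{fiber_latency_us(1200) / 1000:.2f} ms one way")
```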

Due to imperfections in the fibre, light degrades as it is transmitted through it. For distances of greater than 100 kilometers, amplifiers or regenerators are deployed. Latency introduced by these components needs to be taken into account.

Satellite transmission

Satellites in geostationary orbits are far enough away from Earth that communication latency becomes significant — about a quarter of a second for a trip from one ground-based transmitter to the satellite and back to another ground-based transmitter; close to half a second for two-way communication from one Earth station to another and then back to the first. Low Earth orbit is sometimes used to cut this delay, at the expense of more complicated satellite tracking on the ground and requiring more satellites in the satellite constellation to ensure continuous coverage.
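The quarter-second and half-second figures can be checked with a small calculation. The sketch assumes a geostationary altitude of about 35,786 km and ground stations directly beneath the satellite, so it gives a lower bound; the 550 km low Earth orbit altitude is a hypothetical example.

```python
C_KM_PER_S = 299_792.458   # speed of light in vacuum
GEO_ALTITUDE_KM = 35_786   # approximate geostationary altitude above the equator


def hop_delay_s(altitude_km: float) -> float:
    """Ground -> satellite -> ground delay with the satellite directly overhead."""
    return 2 * altitude_km / C_KM_PER_S


geo_hop = hop_delay_s(GEO_ALTITUDE_KM)
print(f"GEO one hop:    {geo_hop:.3f} s")
print(f"GEO round trip: {2 * geo_hop:.3f} s")
# Hypothetical low Earth orbit satellite at 550 km:
print(f"LEO one hop:    {hop_delay_s(550) * 1000:.2f} ms")
```

Real paths are longer because ground stations are rarely at the sub-satellite point, which is why quoted figures tend to be slightly higher than this minimum.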

Audio latency

Audio latency is the delay between when an audio signal enters and when it emerges from a system. Potential contributors to latency in an audio system include analog-to-digital conversion, buffering, digital signal processing, transmission time, digital-to-analog conversion and the speed of sound in air.

Operational latency

Any individual workflow within a system of workflows can be subject to some type of operational latency. It may even be the case that an individual system may have more than one type of latency, depending on the type of participant or goal-seeking behavior. This is best illustrated by the following two examples involving air travel.

From the point of view of a passenger, latency can be described as follows. Suppose John Doe flies from London to New York. The latency of his trip is the time it takes him to go from his house in England to the hotel he is staying at in New York. This is independent of the throughput of the London-New York air link – whether there were 100 passengers a day making the trip or 10000, the latency of the trip would remain the same.

From the point of view of flight operations personnel, latency can be entirely different. Consider the staff at the London and New York airports. Only a limited number of planes are able to make the transatlantic journey, so when one lands they must prepare it for the return trip as quickly as possible. It might take, for example:

Assuming the above are done consecutively, minimum plane turnaround time is:

However, cleaning, refueling and loading the cargo can be done at the same time. Passengers can be loaded after cleaning is complete. The reduced latency, then, is:

The people involved in the turnaround are interested only in the time it takes for their individual tasks. When all of the tasks are done at the same time, however, it is possible to reduce the latency to the length of the longest task. If some steps have prerequisites, it becomes more difficult to perform all steps in parallel. In the example above, the requirement to clean the plane before loading passengers results in a minimum latency longer than any single task.
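The difference between consecutive and parallel turnaround can be sketched as a small scheduling calculation. The task durations below are hypothetical placeholders chosen for illustration, not figures from the text; the only dependency modeled is that passengers board after cleaning.

```python
from functools import lru_cache

# Hypothetical task durations in minutes.
DURATIONS = {"clean": 35, "refuel": 15, "load_cargo": 20, "load_passengers": 10}
# Passengers can only be loaded once cleaning is complete.
PREREQS = {"load_passengers": ["clean"]}


def sequential_latency(durations: dict[str, int]) -> int:
    """Turnaround time when every task waits for the previous one."""
    return sum(durations.values())


def parallel_latency(durations: dict[str, int], prereqs: dict[str, list[str]]) -> int:
    """Turnaround time when tasks run in parallel, limited only by prerequisites."""

    @lru_cache(maxsize=None)
    def finish(task: str) -> int:
        # A task starts when its slowest prerequisite has finished (or at time 0).
        start = max((finish(p) for p in prereqs.get(task, [])), default=0)
        return start + durations[task]

    return max(finish(task) for task in durations)


print(sequential_latency(DURATIONS))         # 80
print(parallel_latency(DURATIONS, PREREQS))  # 45
```

With these placeholder values the parallel schedule takes 45 minutes, which is longer than the longest single task (35 minutes) because of the cleaning-before-boarding dependency.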

Mechanical latency

Any mechanical process encounters limitations modeled by Newtonian physics. The behavior of disk drives provides an example of mechanical latency. Here, it is the seek time for the actuator arm to be positioned above the appropriate track, followed by the rotational latency for the data encoded on a platter to rotate from its current position to a position under the disk read-and-write head.
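As a rough illustration, the average rotational latency is the time for half a revolution, since on average the desired sector is half a turn away from the head. The sketch below assumes a hypothetical 7,200 rpm drive with a 9 ms average seek time; neither figure comes from the text.

```python
def avg_rotational_latency_ms(rpm: float) -> float:
    """Average wait for the target sector: half of one revolution."""
    ms_per_revolution = 60_000 / rpm
    return ms_per_revolution / 2


def avg_access_time_ms(seek_ms: float, rpm: float) -> float:
    """Seek time plus average rotational latency (transfer time ignored)."""
    return seek_ms + avg_rotational_latency_ms(rpm)


# Hypothetical drive: 7,200 rpm, 9 ms average seek.
print(f"{avg_rotational_latency_ms(7200):.2f} ms rotational")
print(f"{avg_access_time_ms(9.0, 7200):.2f} ms total")
```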

Computer hardware and operating system latency

Computers run sets of instructions called processes. In operating systems, the execution of a process can be postponed if other processes are also executing. In addition, the operating system can schedule when to perform the action that the process is commanding. For example, suppose a process commands that a computer card's voltage output be set high-low-high-low and so on at a rate of 1000 Hz. The operating system may choose to adjust the scheduling of each transition (high-low or low-high) based on an internal clock. The latency is the delay between the process instruction commanding the transition and the hardware actually transitioning the voltage from high to low or low to high.

On Microsoft Windows, it appears[original research?] that the timing of commands to hardware is not exact. Empirical data suggest that Windows (using the Windows sleep timer, which accepts millisecond sleep times) will schedule on a 1024 Hz clock and will delay 24 of 1024 transitions per second to make an average of 1000 Hz for the update rate.[citation needed] This can have serious ramifications for discrete-time algorithms that rely on fairly consistent timing between updates, such as those found in control theory. The sleep function and similar Windows API routines were never designed for accurate timing purposes. Certain multimedia-oriented API routines like timeGetTime() and its siblings provide better timing consistency. However, consumer- and server-grade Windows (as of 2011, those based on the NT kernel) were not designed to be real-time operating systems. Drastically more accurate timings could be achieved by using dedicated hardware extensions and control-loop cards.

Linux may have the same problems with scheduling of hardware I/O.[citation needed] The problem in Linux is mitigated by support for POSIX real-time extensions and by the possibility of using a kernel with the PREEMPT_RT patch applied.

On embedded systems, the real-time execution of instructions is often supported by the low-level embedded operating system.
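Scheduling latency of this kind can be observed from user space by comparing a requested sleep with the time that actually elapsed. The sketch below is one simple way to do that; the function name is hypothetical, and results depend heavily on the operating system, timer resolution and system load, so no particular numbers should be expected.

```python
import statistics
import time


def sleep_overshoot_ms(requested_ms: float = 1.0, samples: int = 200) -> list[float]:
    """Return, per sample, how much longer a sleep took than requested (ms)."""
    overshoots = []
    for _ in range(samples):
        start = time.perf_counter()
        time.sleep(requested_ms / 1000.0)
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        overshoots.append(elapsed_ms - requested_ms)
    return overshoots


if __name__ == "__main__":
    results = sleep_overshoot_ms()
    print(f"mean overshoot {statistics.mean(results):.3f} ms, "
          f"worst {max(results):.3f} ms")
```

A large spread between the mean and the worst case is the jitter that makes general-purpose operating systems a poor fit for fixed-rate control loops.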

In simulators and simulation

In simulation applications, 'latency' refers to the time delay, normally measured in milliseconds (1/1,000 sec), between initial input and an output clearly discernible to the simulator trainee or simulator subject. Latency is sometimes also called transport delay.


I know most of the network latency for short distances is due to router processing times. But for longer distances the speed of light also counts, and the speed in a cable is different from the speed of light in a vacuum. What is it?

Hennes

Jader Dias

2 Answers

A typical index of refraction for optical fiber is 1.62, therefore the speed of light in a fiber is approximately 3e8 m/s / 1.62 = 1.85e8 m/s. Therefore it would take at least 1000000 m /1.85e8 m/s = 0.0054 s to travel that distance. Note that this value doesn't cover the extra distance traveled by the light from bouncing side to side.

Ignacio Vazquez-Abrams

Distance Delay is simply the minimum amount of time that it takes the electrical signals that represent bits to travel down the physical wire. Optical cable sends bits at about ~5.5 µs/km, copper cable sends it at ~5.606 µs/km, and satellite sends bits at ~3.3 µs/km. (There are a few additional microseconds of delay from amplifying repeaters in optical cable, but compared to distance, the delay is negligible.)

source: http://www.networkperformancedaily.com/2008/06/latency_and_jitter_1.html


If you want to implement disaster recovery, latency and throughput between your primary and secondary location are key factors. These determine how quickly and how much data you can transport to the secondary location, and hence how much data will be lost in case of failure of the primary location. More formally, the latency and throughput determine the possible recovery point objective. In this post I will walk through measuring latency and throughput between Azure regions, so you can determine the best configuration for your scenario.

Latency is determined by the speed of light and the number of infrastructure components between the source and destination. This means that the closer the primary and secondary location are, the lower the latency. The catch is that if the primary and secondary location are too close together, they could potentially be hit by the same (natural) disaster. When Microsoft picks locations for Azure regions, they are selected to be a safe distance apart for most major disasters (earthquake, flood, and so on).

Options for connecting virtual networks in different regions

In Azure there are three ways to connect virtual networks in different regions: VPN, cross-region virtual network peering, and ExpressRoute.

In this post I will focus on VPN and virtual network peering, but you could use the same method to measure latency and throughput in a configuration with ExpressRoute. Before I go into how you measure latency and throughput, let’s look at the architectural differences between the three options.

VPN

With VPN, you link two virtual networks together by deploying a VPN gateway in both virtual networks, and then set up a connection between the two, as shown below.

Diagram 1: Virtual networks VNET1 and VNET2 in different Azure regions connected via VPN

Cross-region virtual network peering

The downside of using a VPN is the need for VPN gateways, which incur cost, add latency, and limit bandwidth. Within a region, you can use virtual network peering instead, which connects virtual networks without a gateway. Cross-region virtual network peering recently went into preview, and this allows you to securely connect virtual networks across regions, effectively stretching your network across regions in Azure, as shown below.

Diagram 2: Virtual networks VNET1 and VNET2 in different Azure regions connected via virtual network peering (preview)

ExpressRoute

ExpressRoute is a dedicated private connection from your on-premises location to Azure (and Office 365) data centers. You can link virtual networks to ExpressRoute, and with the ExpressRoute Premium feature you can link virtual networks from multiple regions, as shown below.

Diagram 3: Virtual networks VNET1 and VNET2 in different Azure regions connected via ExpressRoute

When you connect two virtual networks in different Azure regions with VPN or virtual network peering, the traffic between the two regions runs over the Microsoft backbone, and the traffic is routed as any normal IP-based traffic. Although we currently don’t offer an SLA on latency and throughput, the fact that the traffic stays within our backbone means latency and throughput typically stay within a certain range.

When using ExpressRoute (with the Premium Add On) this is mostly the same, but there is a subtle difference: the virtual networks aren’t connected directly. Each virtual network is connected to the same Microsoft Edge your Partner Edge is connected to. In most cases that won’t make a lot of difference, but I’ll illustrate the impact through an extreme case. Suppose your on-premises location in Australia is connected via ExpressRoute, and terminates at a Microsoft Edge in Australia. If you connect virtual networks in West Europe and North Europe to this ExpressRoute, the traffic between the virtual networks will now run over the Microsoft Edge in Australia.

Creating two virtual networks connected by VPN

Creating two virtual networks in different regions that are connected through VPN is quite easy using this ARM template from the Azure QuickStarts. You can download my parameter file here, so you can see what kind of values you need to fill in. I used IP ranges for the virtual networks as shown in diagram 1. Note that I used IP address ranges that don’t overlap. Also, the VPN VIPs will be different, as these are automatically assigned.

Deploying the template will take a while, mostly due to the VPN gateway configuration. You can continue with the next steps in the setup once the virtual networks have been created. You don’t have to wait for the VPN gateway configuration to finish.

Adding virtual machines

To measure latency and throughput, you need a virtual machine in both virtual networks. For the tests I’m using Windows Server 2016 on a D3_v2 virtual machine. A D3_v2 virtual machine has 4 CPUs, which may seem like overkill. However, the size of your virtual machine impacts the network bandwidth assigned to the virtual machine. By choosing a larger virtual machine I’m making sure I have some bandwidth to play with. Of course, if you are targeting a certain size machine for your workload, it makes sense to use that size to test with.

Note: you could select accelerated networking when deploying the virtual machine, but that feature mainly impacts networking within a single region.

Once the virtual machines are created, each has an internal IP address. If you used the subnet configuration I used, and deployed in Subnet-1 in each region, the internal IP addresses are 10.10.1.4 for the West Central US virtual network and 10.20.1.4 for the US West 2 virtual network.

Go to the first virtual machine in the portal, and connect to it with remote desktop protocol (RDP). Once the VPN gateway configuration is finished, you can test connectivity by using RDP to connect from the first virtual machine to the second virtual machine on the internal IP address.

Measuring latency and throughput

The easiest way to measure latency and throughput is using PsPing. You can download PsPing from here. PsPing can be used for simple ping functionality without a server, but for latency and bandwidth tests you need to set up a server.

Start the PsPing server

Run the PsPing client

This will get you a measurement over VPN, but for reference we also want a measurement over the public IP address, bypassing the VPN. To enable this, you need to modify the network security group on the virtual machine running the server, so it doesn’t block traffic over the port PsPing is listening to (port 81 in my case). It also makes sense to use different size blocks, to see how that affects the latency.

To test the bandwidth, you can use the -b parameter with PsPing, as follows:

psping -b -l <message size> -n <number of messages> -f <ipaddress>:<port>, for example, psping -b -l 8k -n 1000 -f 10.20.1.4:81 to send 1000 messages of 8 kilobytes.

Public IP vs. VPN gateway

The table below shows the differences between going over the public IP address versus going through the VPN gateway.

Disclaimer: Measurement results will vary between different Azure regions, time of day, and other factors. The maximum value, in particular, varies greatly from test to test. Please test thoroughly to understand the impact on your scenario.

With the small packet size the difference is only a few milliseconds on average, but the maximum latency may vary. The increased latency with a VPN gateway is caused by the additional hop of going over the gateway. The difference between connecting over the public IP address and through the VPN gateway becomes significant with larger requests. This is due to the bandwidth limitations of the VPN gateway. I used the Basic SKU, which has the least bandwidth, and using a more powerful SKU (see About VPN Gateway) will likely have a positive impact.

Note: For maximum throughput and minimum latency you can turn off encryption of the traffic between two virtual networks connected using VPN gateways. Do not use this unless you understand the security implications.

Measuring with cross-region virtual network peering

With the tests over the public IP and through the VPN done, it’s time to move on to cross-region peering. You could set up two entirely new virtual networks and virtual machines, but you can also remove the connection between the VPN gateways, and then set up virtual network peering. You don’t have to delete the VPN gateways, but you can. To remove the connections, take the following steps:

To configure virtual network peering, take the following steps:

Public IP vs. cross-region virtual network peering

The table below shows the differences between going over the public IP address versus going through virtual network peering.

The difference between public IP and cross-region virtual network peering is only a few microseconds, and as such negligible. This makes sense, as the traffic pretty much takes the same physical route through the Microsoft backbone.

Conclusion

Using PsPing you can measure the latency and bandwidth characteristics between different regions. This will help you determine the best options for scenarios such as disaster recovery, and how you should configure synchronization between systems in different regions.

Cross-region virtual network peering is a great addition to Azure networking. It provides lower latency and higher throughput than connections over VPN gateway. Once it becomes generally available, it will be the preferred method to peer networks in different regions.

--- Thanks to Steffen Vorein and RoAnn Corbisier for editing. ---

I have a link between a host and a switch.

The link has a bandwidth & a latency. How to calculate the time of 2 packets(with size 1KB) to be transferred from Host A to Switch 1?

Here's the diagram(I am talking about the first link)

Note: I just want to calculate it manually for these values, I want to know the principles/laws of calculating these problems.

Carlos

MhdSyrwan

2 Answers

Formulas:

Variable decoder:

Applying to your question:

I will only calculate information for the link between Host A and Switch 1:

Mike Pennington

Quite roughly, the formula is:

Bottom line:

Notice that I converted all units to bytes and seconds, so calculations are meaningful.

This is more accurate for large packets. For small packets, real numbers are larger.

Prafulla Kumar Sahu

ugoren


|

|